Most corporate training programs die quietly. They launch to a flurry of internal Slack announcements, hit 60% completion in the first week, then flatline. Six months later, nobody can tell you what changed because of them.

I've seen this pattern more times than I'd like to admit. The content wasn't bad. The intent was right. What failed was the design — specifically, how the program was built before anyone touched a course authoring tool.

Creating a corporate training program that people actually use requires more than packaging knowledge into modules. It requires a clear diagnosis of the problem you're solving, a structure that fits how your employees actually work, and a delivery method they won't abandon after day three.

Here's how to build one that holds together.

What Is a Corporate Training Program?

A corporate training program is a structured set of learning activities designed to develop specific skills or knowledge in employees. That's the textbook version. The practical version: it's a deliberate effort to change how people work, with enough structure to make that change measurable.

Corporate training programs can be mandatory (compliance, onboarding) or optional (upskilling, leadership development), and they can run over a few hours or several months. What distinguishes a program from a one-off training session is intentional sequencing: content builds on itself, and there's a defined outcome you're working toward.

The word "program" matters here. It implies design, not just delivery.

Step 1: Define the Business Problem You're Actually Solving

I'd argue this is where 80% of programs go wrong before they start. Someone senior decides employees need communication training. Or resilience. Or digital skills. A broad theme gets turned into a learning brief, and then suddenly you have a 6-module course on "Effective Communication in the Workplace" that no one specifically asked for.

Before designing anything, you need a crisper problem statement. Not "we need to improve customer service skills", but "our support team's average resolution time is 9 minutes, and the top performers average 6 minutes. What do those top performers know that the others don't?"

That specificity changes everything downstream. It tells you who needs to learn what, which means you can measure whether learning actually happened.

A good problem statement for a corporate training program answers three questions: What's the performance gap? Who has it? What's the cost of not fixing it?
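To make those three questions concrete, here's a minimal Python sketch that sizes the support example above. The 9 and 6 minute figures come from that example; the headcount, the ticket volume, and every name in the code are assumptions I've made up for illustration, so swap in your own numbers.

```python
from dataclasses import dataclass


@dataclass
class PerformanceGap:
    """One problem statement: what's the gap, who has it, what does it cost."""
    metric: str
    current_avg: float               # wider team, e.g. minutes per ticket
    benchmark_avg: float             # top performers, same unit
    people_affected: int             # who has the gap
    units_per_person_per_month: int  # e.g. tickets handled per person

    def gap(self) -> float:
        # Assumes lower is better, as with resolution time.
        return self.current_avg - self.benchmark_avg

    def monthly_cost_hours(self) -> float:
        # Assumes the metric is measured in minutes.
        minutes = self.gap() * self.units_per_person_per_month * self.people_affected
        return minutes / 60


support = PerformanceGap(
    metric="average resolution time (minutes)",
    current_avg=9.0,
    benchmark_avg=6.0,
    people_affected=20,              # hypothetical headcount
    units_per_person_per_month=400,  # hypothetical ticket volume
)
print(support.gap())                 # 3.0 minutes per ticket
print(support.monthly_cost_hours())  # 400.0 hours per month
```

The point isn't the arithmetic. It's that a problem statement you can put into a structure like this is specific enough to design against.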

Step 2: Run a Needs Analysis Before You Touch Any Content

Designing a corporate training program without a needs analysis is like building an app without user research. You'll build something, it just won't be right.

A proper needs analysis combines two things: data and conversations. Pull whatever performance metrics you have — sales numbers, ticket resolution rates, error logs, manager ratings. Then talk to the people closest to the work: team leads, high performers, and people who've recently been through the relevant process for the first time.

What you're listening for isn't just knowledge gaps. You're listening for skill gaps (they know what to do but can't do it under pressure), motivation gaps (they know and could, but don't), and environment gaps (they'd do it differently if their tools or workflow allowed). Training fixes knowledge and skill gaps. It doesn't fix motivation or environment gaps, and pretending otherwise wastes everyone's time.

⚡ One thing I consistently find underused: exit interview data. If people leaving a role consistently mention they weren't prepared for a specific part of the job, that's your training gap, named for you.

Step 3: Set Objectives That Are Actually Measurable

Once you've diagnosed the problem, the objectives for your corporate training program need to describe observable behaviour, not internal states.

"Employees will understand the importance of data privacy" is not a measurable objective. "Employees will correctly classify sensitive data types in 95% of test scenarios" is.

I use a modified version of Bloom's Taxonomy for this, skipping straight past "awareness" and "understanding" to action verbs: apply, analyse, evaluate, create. If your objective uses a passive or vague verb (understand, appreciate, be aware of), rewrite it.

⚡ The number of objectives matters too. Three to five per program is the right range for most corporate training. More than that, and you're either describing a curriculum, not a program, or you've not been ruthless enough about scope.
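If you want a quick sanity check on a draft list of objectives, the verb test and the 95% threshold from this step can be sketched in a few lines of Python. The phrase list is just the three examples named above, and the function names are my own placeholders rather than part of any tool.

```python
import re

# The vague verbs named in this step; extend the list as needed (assumption).
VAGUE_PHRASES = ["understand", "appreciate", "be aware of"]


def vague_phrases_in(objective: str) -> list[str]:
    """Return any vague phrases found in an objective (whole words only)."""
    text = objective.lower()
    return [p for p in VAGUE_PHRASES if re.search(rf"\b{re.escape(p)}\b", text)]


def meets_target(correct: int, total: int, target: float = 0.95) -> bool:
    """Compare an observed pass rate against the objective's threshold."""
    return total > 0 and correct / total >= target


vague = "Employees will understand the importance of data privacy"
measurable = "Employees will correctly classify sensitive data types in 95% of test scenarios"

print(vague_phrases_in(vague))       # ['understand']
print(vague_phrases_in(measurable))  # []
print(meets_target(38, 40))          # True: 38 of 40 scenarios is exactly 95%
```

A keyword check like this only catches the obvious cases. It won't tell you whether an objective is the right one, just whether it's phrased in a way you can observe.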

Step 4: Choose the Right Format for the Content and the Audience

Corporate training format decisions get made too late in the process. Most teams default to eLearning because it scales, and that's a reasonable starting point, but it's not always right.

Here's how I'd frame the decision:

- Self-paced eLearning — works well for knowledge transfer and compliance topics where the content is stable and the audience is distributed. Not ideal when the skill requires feedback on practice.

- Cohort-based live sessions — better when the learning is collaborative, when discussion matters, or when you're building a shared mental model across a team.

- Blended — the most effective for complex skills, but requires more planning. You need to be clear about what happens asynchronously versus what requires a facilitator.

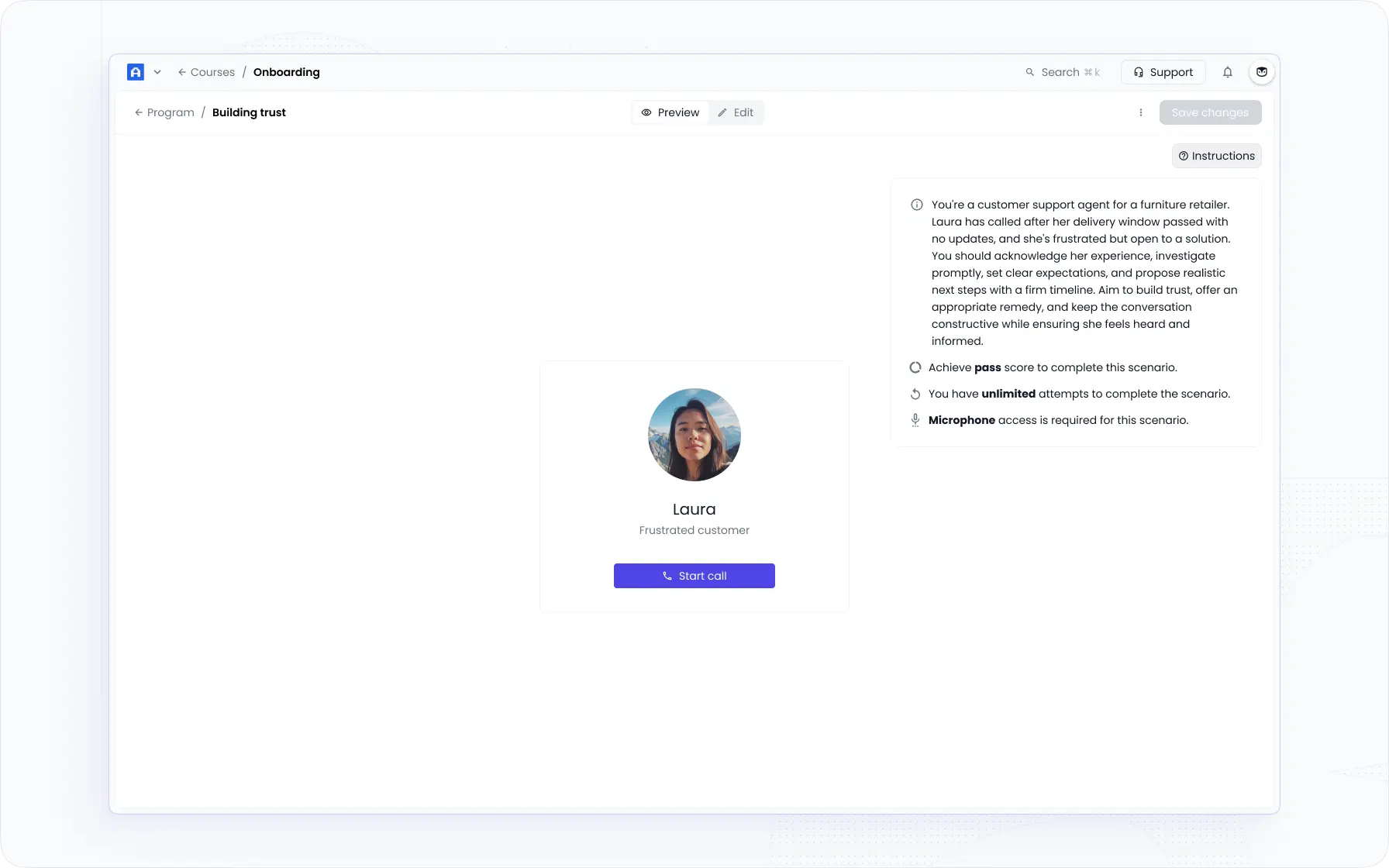

- AI Roleplay and scenario practice — underused and increasingly practical. For skills like sales conversations, customer calls, or difficult feedback, scenario-based practice with structured rubric feedback closes the gap between "knowing" and "doing" faster than any other format I've used.

The honest answer is that most corporate training programs would benefit from being shorter and more practice-heavy, rather than longer and more content-heavy.

Step 5: Build the Learning Path Structure

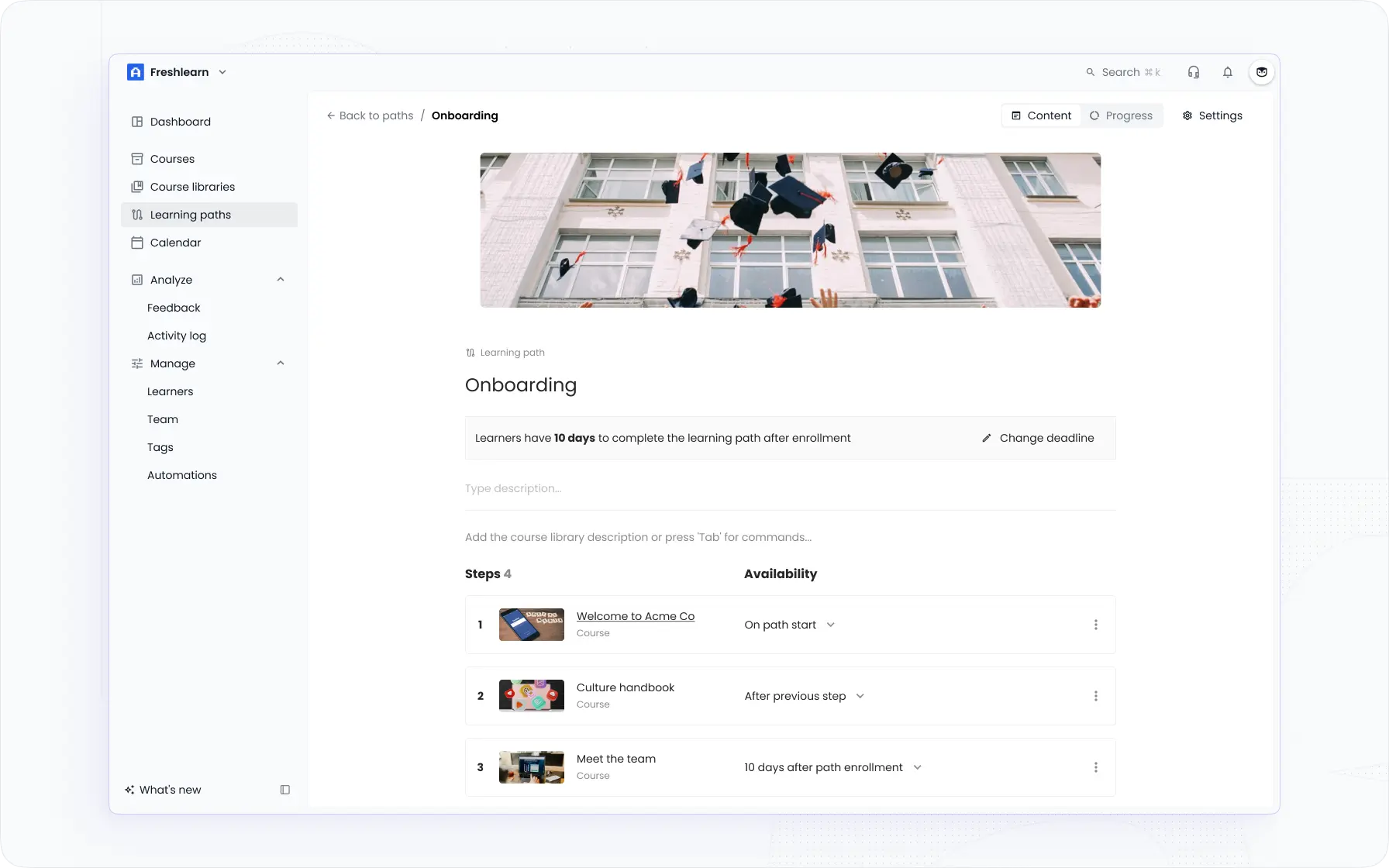

Once format is settled, structure it. A learning path for a corporate training program typically follows one of three patterns:

Linear — every learner goes through the same sequence in the same order. Useful for onboarding and compliance, where sequence matters.

Branching — learners follow different paths based on role, seniority, or prior knowledge assessment. Adds complexity but dramatically improves relevance.

Hub-and-spoke — a core set of required modules, with optional depth available for those who want it. Good for skill development programs where baseline + specialisation is the goal.

For most programs at companies with 100–500 employees, I'd start with linear and add branching only where you've confirmed the need through your role analysis. The temptation is to build the sophisticated structure first. The better move is to get a working program in front of learners, then adapt.
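As a rough illustration of "linear first, branching where confirmed", here's how that structure might look as data in Python. Every module ID and role name is a hypothetical placeholder; the only idea carried over from the text is that everyone gets the same core sequence and a branch is added only for roles where the analysis showed a need.

```python
# All module IDs and role names below are hypothetical placeholders.
CORE_PATH = ["company-basics", "security-basics", "tools-overview"]

# Branches exist only for roles where the analysis confirmed a need.
ROLE_MODULES = {
    "support": ["ticket-triage", "difficult-calls"],
    "sales": ["discovery-calls"],
}


def build_path(role: str) -> list[str]:
    """Linear core for everyone, plus a role branch where one exists."""
    return CORE_PATH + ROLE_MODULES.get(role, [])


print(build_path("support"))
print(build_path("finance"))  # no confirmed branch, so the linear core only
```

Starting this way keeps the first version simple: adding a branch later is one new entry in the mapping, not a redesign.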

One structural decision that gets ignored: what happens after completion? If there's no reinforcement (no manager check-in, no spaced repetition, no practice opportunity), the learning evaporates within two weeks. Ebbinghaus showed us this in 1885. We've spent the 140 years since mostly ignoring it.

Step 6: Build It — With a Realistic Timeline

Here's what building a corporate training program actually takes, roughly:

That's 7–12 weeks for a program of moderate scope. I've seen teams try to compress this to three weeks. It usually results in a course that goes through seven revision cycles post-launch instead of one controlled pilot.

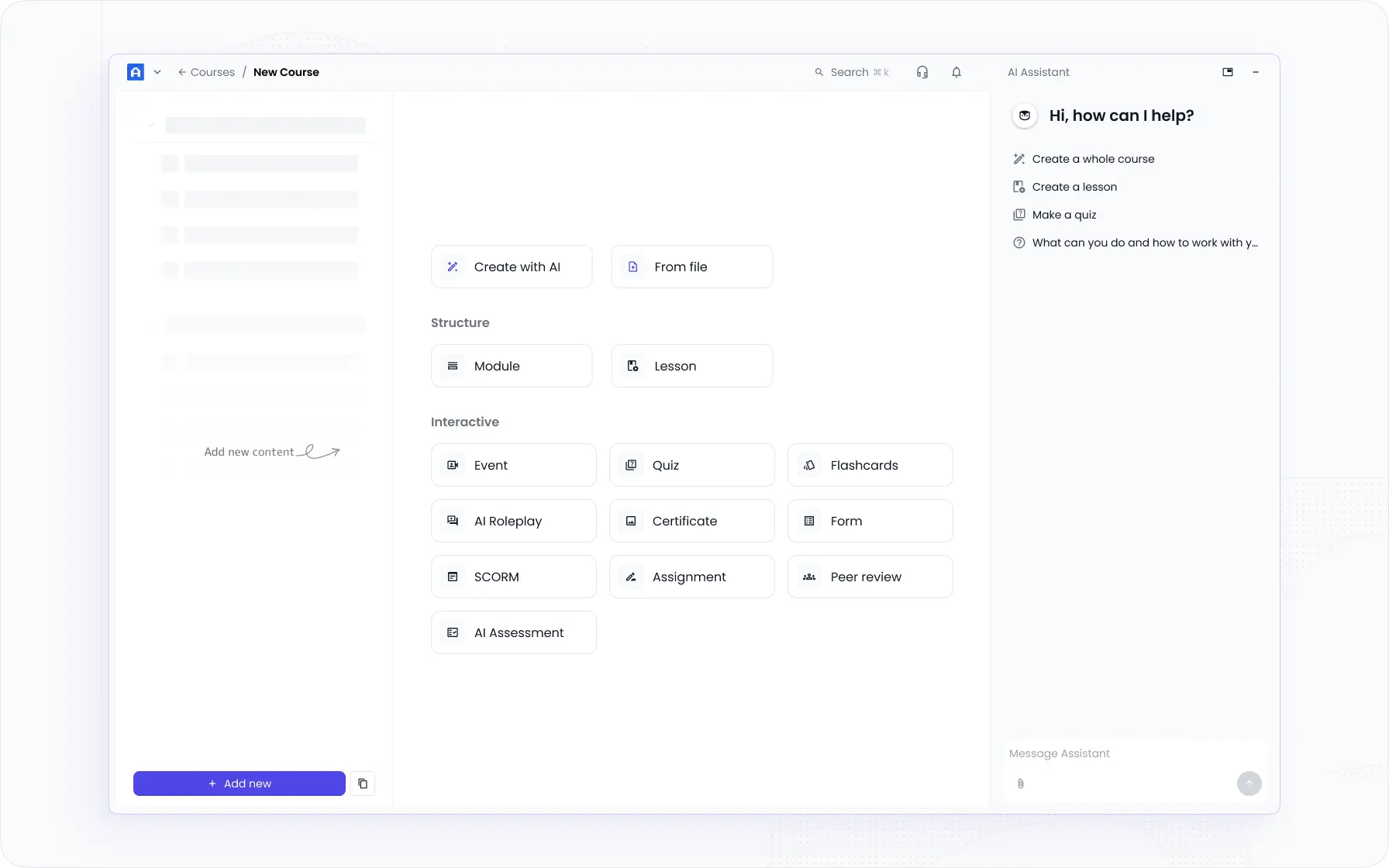

The development phase is where most time gets lost. If you're building content from scratch without an AI-assisted authoring tool, allow more time. Platforms like EducateMe can generate a full course draft from a prompt, URL, or document in a fraction of that time.

Step 7: Pilot Before You Launch

I'm going to be direct here: skipping the pilot is almost always a mistake.

A pilot group of 10–20 people will surface structural issues, navigation confusion, pacing problems, and content gaps that no internal review process will catch. Run them through the full program. Give them a short debrief survey. Talk to three or four of them directly.

The questions I ask in a pilot debrief: Was anything unclear? What felt too slow or too fast? What felt missing? Would you change how you'd do your job based on this? That last question is the one that tells you whether the program is working.

Step 8: Launch, and Make the Manager Part of It

The single most consistent predictor of training program success I've seen is whether managers are involved. Not just briefed — involved. If a manager knows what their team is learning, why it matters, and has a simple way to reinforce it in 1-on-1s, completion rates go up and behaviour change actually happens.

Send managers a one-page brief before launch: what the program covers, what changed behaviour looks like, two or three questions they can ask in conversation. That's not a lot of effort, but the difference it makes is significant.

Step 9: Measure Outcomes

Completion rates are not a measure of program effectiveness. They're a measure of program access. I say this having worked with L&D teams who report 90% completion to leadership as evidence the training worked, when nobody tracked whether performance actually changed.

For a proper corporate training program, you need at least two levels of measurement: learning (did knowledge or skill improve, measured by assessment or observation?) and behaviour (did people do things differently on the job?). The third level, business results, is harder to isolate, but tracking the performance gap from Step 1 over time gives you a reasonable proxy.

If you built your objectives correctly in Step 3, your measurement framework almost writes itself.
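Here's a small Python sketch of what tracking the Step 1 gap over time could look like. The baseline (9 minutes) and benchmark (6 minutes) come from the earlier support example; the check-in readings are invented for illustration, and the function name is my own.

```python
def gap_closed(baseline: float, benchmark: float, observed: float) -> float:
    """Fraction of the original gap that has closed (0.0 = none, 1.0 = all)."""
    original_gap = baseline - benchmark
    if original_gap == 0:
        raise ValueError("baseline equals benchmark: there is no gap to close")
    return (baseline - observed) / original_gap


# Hypothetical readings: days after launch -> average minutes per ticket.
checkins = {30: 8.4, 60: 7.5, 90: 7.2}

for day, observed in checkins.items():
    share = round(gap_closed(9.0, 6.0, observed), 2)
    print(f"day {day}: {share:.0%} of the gap closed")
```

A number like this is a proxy, not proof. Other things change over three months too, so read it alongside the assessment and on-the-job observations rather than instead of them.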

Key Takeaways

Creating a corporate training program that holds up requires a real diagnosis before any design starts. The sequence matters: problem → analysis → objectives → format → structure → build → pilot → launch → measure. Skipping any of these steps doesn't save time — it just moves the cost further down the line, where it's harder to fix.

The programs I've seen work best share one trait: they're built for a specific performance gap, not for a general topic. When you can name the gap precisely, the rest of the design follows naturally.

If you're at the stage of choosing where to run your program, I've put together a guide to the best LMS platforms for corporate training — worth a look before you commit to a tool.

Frequently Asked Questions

What's the difference between a training program and a training course?

A training course is typically a single learning experience focused on one topic. A training program is a broader initiative made up of multiple courses, activities, or modules designed to address a defined performance gap over time. Programs have a beginning, middle, and end — and usually include assessment, practice, and some form of reinforcement after the content is done.

How long does it take to create a corporate training program?

A well-scoped program typically takes 7–12 weeks from needs analysis to launch — longer if content is complex or stakeholder review cycles are slow. The development phase is the biggest variable. Using an AI-powered platform like EducateMe can cut course creation time significantly; some teams complete the build phase in days rather than weeks, especially for structured onboarding or compliance content.

What should I include in a corporate training program?

At minimum: clear learning objectives tied to a business outcome, structured content in a format that fits the audience, practice opportunities or assessments, and a plan for reinforcement after completion. The specifics depend on the performance gap you're addressing. Most programs also benefit from a manager communication plan — training without any manager involvement tends to have lower transfer to the job.

How do I know if a corporate training program is working?

Completion rate is the wrong answer. It tells you people reached the end of the content, not that anything changed. Measure at two levels: did learners improve on assessments or observed skill (learning), and did their behaviour change on the job (transfer)? If you defined your objectives with action verbs and measurable outcomes, your evaluation criteria are already built in. Revisit the original performance gap 30, 60, and 90 days post-launch.